Conversational News

The increasing prevalence of smart speakers in regular households, combined with their ease of use and immediate availability, makes them an excellent medium for news consumption: for example, at the breakfast table, while cooking, or while doing small side tasks. In such cases, the ability to consume the news hands-free and without looking at a screen is the most important advantage of smart speakers over other media. This advantage relies on being able to access all relevant information by talking to the system in natural and thus intuitive language, which makes for an engaging user experience. For this reason, big news publishers like the New York Times or CNN already produce daily flash briefings just for such devices. However, producing such briefings is too costly for small publishers. This project therefore laid the foundations for a system that enables even small publishers to publish their written news articles on smart speakers in an engaging manner.

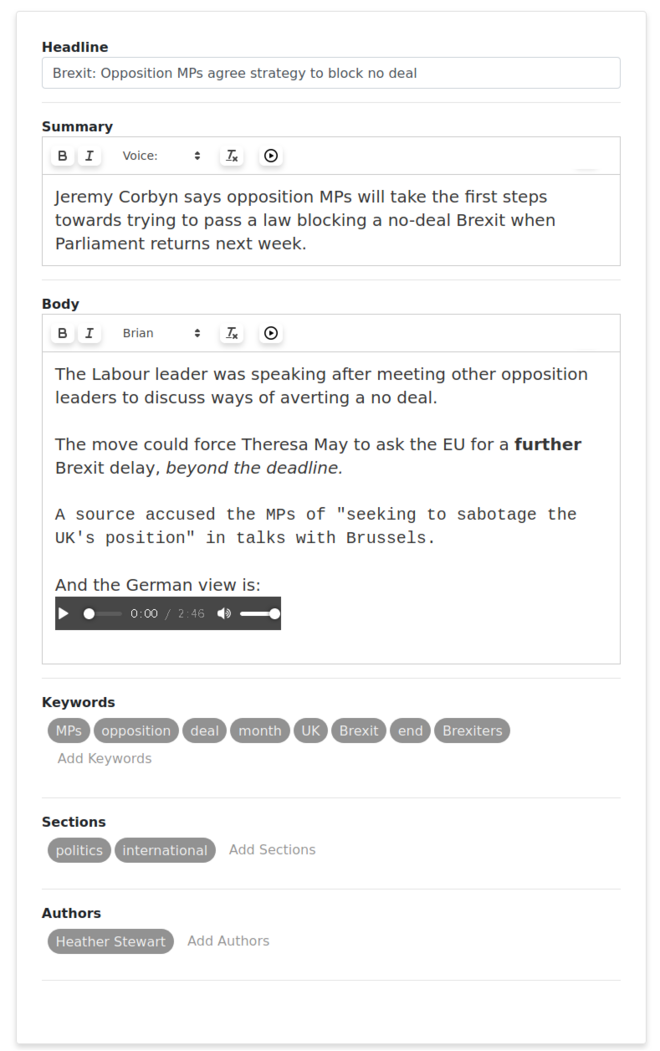

In this project, we developed a system that contains both a web interface for the journalist to write (or paste in) their news articles and an Alexa-powered smart speaker interface for news consumers to query for and listen to the written articles. The journalist can mark spans of text to be read with emphasis through common visual metaphors like italics or boldface. Furthermore, pauses can be specified through paragraph breaks, and different voices can be selected through different fonts. The server then analyzes the article for keywords and estimates the time it takes to read the article aloud: if the summary or headline takes too long, the journalist is warned, as long titles can frustrate the news consumer in a voice interface much more than in a display-based one. The news consumer can then start our Alexa skill and query for news by title or keyword. They then hear the list of relevant news articles and select from this list using intuitive language (e.g., "the second one").
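The mapping from visual markup to speech and the read-time warning can be illustrated with a minimal Python sketch. It is not the project's implementation (the server was built with Servlets and XSLT). The style-to-emphasis mapping, the 500 ms paragraph pause, the speaking rate of 150 words per minute, and all function names are assumptions chosen for illustration. Only the SSML elements `speak`, `emphasis`, and `break` are standard tags that Alexa accepts. Voice selection by font is left out to keep the example short.

```python
from xml.sax.saxutils import escape

# Hypothetical mapping from visual styles to SSML emphasis levels (assumption).
EMPHASIS_BY_STYLE = {"italic": "moderate", "bold": "strong"}

# Assumed average speaking rate; not a value measured in the project.
WORDS_PER_MINUTE = 150


def span_to_ssml(text, style=None):
    """Wrap one text span in an SSML emphasis tag if it carries a known style."""
    safe = escape(text)  # escape &, <, > so the text is valid inside SSML
    level = EMPHASIS_BY_STYLE.get(style)
    if level is None:
        return safe
    return f'<emphasis level="{level}">{safe}</emphasis>'


def article_to_ssml(paragraphs, pause="500ms"):
    """Render paragraphs of (text, style) spans, with a pause between paragraphs."""
    rendered = [
        "".join(span_to_ssml(text, style) for text, style in spans)
        for spans in paragraphs
    ]
    separator = f'<break time="{pause}"/>'
    return "<speak>" + separator.join(rendered) + "</speak>"


def estimated_seconds(text, wpm=WORDS_PER_MINUTE):
    """Estimate the read-aloud duration from the word count alone."""
    return len(text.split()) * 60 / wpm


def is_too_long(text, limit_seconds, wpm=WORDS_PER_MINUTE):
    """Return True if reading the text aloud likely exceeds the given limit."""
    return estimated_seconds(text, wpm) > limit_seconds


article = [
    [("Smart speakers are ", None), ("everywhere", "bold"), (".", None)],
    [("News follows.", None)],
]
print(article_to_ssml(article))
```

A journalist-facing check could then call `is_too_long(headline, limit_seconds)` with a limit chosen by the publisher and show a warning when it returns `True`. A word-count estimate ignores pauses and emphasis, so a real system would likely need a more careful measure.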

In this project, the students employed core web technologies to build the server and the two connected interfaces. They used HTML, CSS, JavaScript, and JSON for the journalist interface, XSLT and Servlets for the server, and the Amazon Alexa Software Development Kit for communication with the Amazon cloud that connects to the Amazon Echo devices. Furthermore, they used the Natural Language Processing framework UIMA to prepare for more sophisticated analyses of the journalists' writings. This project is in further development as part of the Google Digital News Initiative in cooperation with the Big Data Analytics Group at the Martin Luther University Halle-Wittenberg.

Supervision: Roxanne El-Baff, Johannes Kiesel, Benno Stein

Students: Feras Al Sabaa, Xiaoni Cai, Lucky Chandrautama, Henrik Leisdon, Larisa Sorokina

The audio below contains a short demonstration in which the demo article shown in the screenshot is read aloud.