The code for our upcoming CVPR 2026 publication "KαLOS finds Consensus: A Meta-Algorithm for Evaluating Inter-Annotator Agreement in Complex Vision Tasks" is now available.

As part of the VarInSightAI project, this toolkit provides a standardized framework to quantify annotation consistency and identify label noise in complex computer vision datasets. The paper will be presented at the IEEE/CVF Computer Vision and Pattern Recognition Conference (CVPR) in Denver in June 2026.

Description

Visual assessment plays a central role in structural inspection, especially in the analysis of damage to critical infrastructure. However, annotation variations (deviations, uncertainties and sometimes outright errors in the annotations) pose a major challenge for machine learning because they significantly affect model performance. This problem is not limited to structural inspection; it also affects many other application areas where visual data is processed.

Aim and approach

The project addresses various aspects of dealing with annotation variation (AV):

- Identification, quantification, impact analysis and correction of AV

- Development of new methods for dealing with AV during model training

- Creation of practical evaluation metrics that take into account the effects of AV

In addition, a damage detection dataset with real-world AV will be curated to advance the research and provide a foundation for future work.

Software

The results and tools developed in the project will be published as freely available software. The FiftyOne plugin Multi-Annotator Toolkit already supports the identification, quantification and impact analysis of AV.

Funding

The project is funded by the German Research Foundation (DFG) with €381,000.

Project duration: 05/2025 - 04/2028

Project related publications

- C. Benz and V. Rodehorst: CrackStructures and CrackEnsembles - The Power of Multi-View for 2.5D Crack Detection, IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Tucson, Arizona, 2025.

- D. Tschirschwitz and V. Rodehorst: Label Convergence - Defining an Upper Performance Bound in Object Recognition through Contradictory Annotations, IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Tucson, Arizona, 2025.

- C. Benz and V. Rodehorst: ENSTRECT - A Stage-based Approach to 2.5D Structural Damage Detection, European Conference on Computer Vision (ECCV) 2nd Workshop on Vision-based InduStrial InspectiON (VISION), Milan, Italy, 2024.

- C. Benz and V. Rodehorst. "MVCrackViT: Robust Multi-View Crack Detection for Point Cloud Segmentation using View Attention". In: Proc. IEEE Int. Conf. on Image Processing (ICIP), 2024.

- C. Benz and V. Rodehorst. "OmniCrack30k: A Benchmark for Crack Segmentation and the Reasonable Effectiveness of Transfer Learning". In: Proc. IEEE/CVF Conf. on Computer Vision and Pattern Recognition (CVPR) Workshops, 2024.

- J. Flotzinger, P. J. Rösch, C. Benz, M. Ahmad, M. Cankaya, H. Mayer, V. Rodehorst, N. Oswald and T. Braml. "Dacl-Challenge: Semantic Segmentation During Visual Bridge Inspections". In: Proc. IEEE/CVF Winter Conf. on Applications of Computer Vision (WACV) Workshops, 2024.

- D. Tschirschwitz, C. Benz, M. Florek, H. Noerderhus, B. Stein and V. Rodehorst. "Drawing the Same Bounding Box Twice? Coping Noisy Annotations in Object Detection with Repeated Labels". In: Proc. German Conf. on Pattern Recognition (GCPR), 2023.

- C. Benz and V. Rodehorst. "Image-Based Detection of Structural Defects Using Hierarchical Multi-scale Attention". In: Proc. German Conf. on Pattern Recognition (GCPR), 2022.

- D. Tschirschwitz, F. Klemstein, B. Stein and V. Rodehorst. "A Dataset for Analyzing Complex Document Layouts in the Digital Humanities and its Evaluation with Krippendorff's Alpha". In: Proc. German Conf. on Pattern Recognition (GCPR), 2022.

- C. Benz, P. Debus, H. K. Ha and V. Rodehorst. "Crack Segmentation on UAS-based Imagery using Transfer Learning". In: Proc. Int. Conf. on Image and Vision Computing New Zealand (IVCNZ), 2019.

Label variations exist in all datasets, but they often remain hidden in those containing only one annotation per image. While reducing these variations is a direct way to mitigate the issues caused by "noisy labels," it is first necessary to determine whether they stem from structural disagreements or from individual errors.

Within this project, we developed the KαLOS (KaLOS) toolkit to rigorously evaluate dataset quality. The tool provides granular diagnostics to identify:

- Hard images and difficult classes for annotators.

- Collaboration clusters and "schools of thought".

- Annotator vitality and individual rater consistency.

KαLOS is designed for use during both the creation and post-hoc assessment of datasets. It is versatile enough to evaluate human labels, semi-automated proposals, and fully automated annotations.

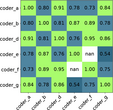

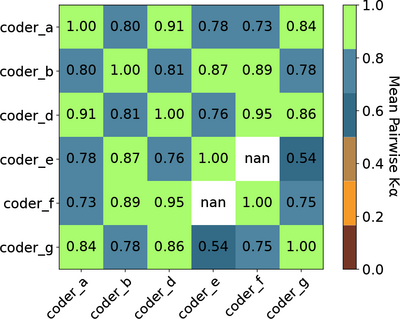

Figure: Collaboration cluster analysis on the TexBiG dataset, visualizing agreement levels between raters. "NaN" values indicate raters who did not share any overlapping tasks.
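To make the idea of a rater-by-rater agreement matrix with "NaN" entries concrete, here is a minimal Python sketch. It is not the KαLOS implementation: it assumes simple image-level class labels and uses plain percent agreement, whereas the toolkit targets complex vision tasks and a more rigorous agreement measure. The function name `pairwise_agreement`, the data layout and the toy labels are all hypothetical.

```python
import math
from itertools import combinations


def pairwise_agreement(labels):
    """Compute percent agreement for every pair of raters.

    labels: dict mapping rater name -> dict mapping item id -> class label.
    Returns a dict mapping (rater_a, rater_b) -> fraction of shared items
    on which both raters chose the same label, or NaN if the two raters
    annotated no items in common.
    """
    matrix = {}
    for a, b in combinations(sorted(labels), 2):
        # Items that both raters annotated
        shared = labels[a].keys() & labels[b].keys()
        if not shared:
            # No overlapping tasks: agreement is undefined
            score = math.nan
        else:
            matches = sum(labels[a][item] == labels[b][item] for item in shared)
            score = matches / len(shared)
        # Store symmetrically so either ordering can be looked up
        matrix[(a, b)] = score
        matrix[(b, a)] = score
    return matrix


# Hypothetical toy data: r3 shares no images with r1 or r2
toy = {
    "r1": {"img1": "crack", "img2": "spalling", "img3": "crack"},
    "r2": {"img1": "crack", "img2": "crack", "img3": "crack"},
    "r3": {"img4": "rust"},
}
result = pairwise_agreement(toy)
```

In this toy example, `r1` and `r2` agree on two of their three shared images, while every pair involving `r3` is undefined and therefore stored as NaN. Clustering raters on such a matrix is one way to surface groups that label alike, under the assumption that undefined pairs are excluded or handled explicitly.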

The KαLOS code is available on GitHub.

KαLOS will be presented at CVPR in Denver in June 2026 as a main track paper.

[1] Tschirschwitz, D., and Rodehorst, V.: KαLOS finds Consensus: A Meta-Algorithm for Evaluating Inter-Annotator Agreement in Complex Vision Tasks. IEEE/CVF Computer Vision and Pattern Recognition Conference (CVPR), Denver, Colorado, 2026. [arXiv]