Latest revision as of 09:55, 17 May 2013

Microscopy Stage Construction

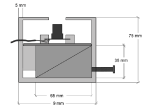

Approach with two wedges. The aim was to find a simple mechanism that allows for high precision. Tightening the screw moves the lower wedge to the left, which pushes up the upper wedge with the camera on top of it. Loosening the screw moves the camera down. Because the mechanism is so simple, it should be less susceptible to errors.
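The precision of such a wedge drive can be estimated with a little trigonometry. The following is only a sketch under assumptions that are not part of the build described here: the upper wedge is guided so that it can only move vertically, and the thread pitch (0.7 mm) and wedge angle (10 degrees) are hypothetical example values, not measurements of the actual stage.

```python
import math


def lift_per_turn(thread_pitch_mm, wedge_angle_deg):
    """Vertical travel of the camera platform for one full turn of the screw.

    One turn moves the lower wedge sideways by the thread pitch; the sloped
    surface converts that into a rise of pitch * tan(angle).
    """
    return thread_pitch_mm * math.tan(math.radians(wedge_angle_deg))


# Hypothetical example values, not taken from the real model.
pitch_mm = 0.7
angle_deg = 10.0
print(f"Lift per turn: {lift_per_turn(pitch_mm, angle_deg):.3f} mm")
```

A flatter wedge or a finer thread gives less lift per turn and therefore finer focus control.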

3D Model

Preparing the Camera

First remove the lens of the camera. Use a screwdriver to unscrew the outer parts. Then use a hand saw or a pair of nippers to crack open the case around the cable. Carefully remove the plastic without damaging the cable. As a result you get the PCB with the cable and the LEDs. For now leave the black plastic ring on the PCB as a protection for the sensor.

The second step is to remove the LEDs. Unsolder them carefully and clean the solder holes with a desoldering pump.

Cardboard Model

Copper Model

After checking and improving the functionality of the design with the cardboard construction, the final model can be built. It uses the base material for printed circuit boards (conductor boards), which is made of epoxy with a copper surface. The material is easy to work with, and the smooth copper surface lets the wedges slide smoothly. The design was extended with two small springs that constantly pull the camera platform down, preventing it from being pushed up by the stiff camera cables. You will need:

- conductor board material

- threaded bolt

- plastic knob

- soldering iron

- solder

- two small extension springs

- small metal clips to keep the threaded bolt in place

- ribbon cable

- tiny screws

- plastic spacers to fix the camera in the right place

- fretsaw

- rasp

- sanding paper

- drill

The conductor board material can easily be sawn with a fretsaw. Any sharp edges can be removed with a rasp and sanding paper. The parts are assembled by soldering them together, and a nut is soldered to the inside of one of the wedges. The threaded bolt is fixed to the box with small clips that keep it steady but still allow it to turn. It reaches through the outer wall of the box into the nut inside the wedge and thereby provides the mechanism for the wedge movement.

Microscopic Images

Calculating Camera Resolution

An iPad with 52 ppcm (pixels per cm) was used to calculate the camera resolution. At this density one iPad pixel is 10/52 mm wide, which is roughly 0.2 mm. In the camera image, these 0.2 mm span 300 pixels. Therefore one pixel of the camera shows 0.00067 mm (0.2 mm / 300) of the specimen. Since the image is 640 pixels wide and 480 pixels high, the visible area is about 0.43 mm wide (0.00067 * 640) and 0.32 mm high (0.00067 * 480).
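The same calculation can be written as a short script. This is only a sketch; the function and parameter names are made up for illustration. Note that it does not round the iPad pixel width to 0.2 mm first, so it yields slightly smaller numbers than the rounded figures in the text (roughly 0.41 mm by 0.31 mm).

```python
def field_of_view(screen_ppcm=52, camera_px_per_screen_px=300,
                  width_px=640, height_px=480):
    """Return (mm per camera pixel, image width in mm, image height in mm)."""
    screen_pixel_mm = 10 / screen_ppcm                 # width of one iPad pixel
    mm_per_camera_px = screen_pixel_mm / camera_px_per_screen_px
    return (mm_per_camera_px,
            mm_per_camera_px * width_px,
            mm_per_camera_px * height_px)


scale, width_mm, height_mm = field_of_view()
print(f"{scale:.5f} mm per pixel, image is {width_mm:.2f} mm x {height_mm:.2f} mm")
```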

Artemia Salina

To find interesting material to examine, Artemia salina were bred. Artemia salina is a very old species of brine shrimp. Breeding sets are sold in pet shops and have regularly been included as a gimmick in children's science magazines. Unfortunately even the newly hatched shrimps, called nauplii, are too big to be examined thoroughly with the camera.

<videoflash type=vimeo>62867012|400|260</videoflash>

Hay infusion

Another way to obtain specimens for the microscope is a hay infusion. Collect a handful of hay and dry leaves and about 500 ml of pond water. Put the hay in a glass bowl and pour the water over it. Keep the hay infusion in a bright place at room temperature. After a few days you can find protozoa such as Paramecium, Amoeba or Euglena. Which protozoa develop depends on the material that is used. Possible materials are hay, dry leaves or lettuce that was not treated with pesticides.

After 5 days at room temperature a lot of bacteria and also quite a lot of protozoa have developed.

<videoflash type=vimeo>62992002|400|260</videoflash>

<videoflash type=vimeo>62993903|400|300</videoflash>

Clotting Blood

This is a sped-up video of clotting blood. The video's speed was increased by about 700%. In real time the clotting took almost 5 minutes.

<videoflash type=vimeo>62994294|400|300</videoflash>

Guess what

<videoflash type=vimeo>54253797|400|300</videoflash>

Artistic approach

The aim was to create sounds for the microscopic pictures that are distinct and reflect the processed video input. The first step was to find parameters that can be extracted directly from the picture and transformed into sound in a logical way. The visual programming language Pure Data and the software MainStage can be used for this transformation.

Parameters such as

- brightness

- contrast

- histogram

- movement

- colour

were considered.

There were however some restrictions because of the quality of the image. The idea of using colour for example had to be dismissed because of the automatic white balance of the camera.

In the end three different parameters were chosen: the overall brightness of the image, the histogram of the image, which changes over the course of a video, and the movement of individuals inside the picture.

Brightness

The brightness parameter was used to create an atmospheric sound that does not undergo any sudden changes. Long-lasting notes with echo were chosen to create a general atmosphere. <videoflash type=vimeo>62855963|520|270</videoflash> The software adds the RGB values of the picture and divides the sum by 3, which gives the general brightness of the picture. This value is converted to a MIDI-compatible value, which a software synthesizer turns into sound.
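The actual processing is done in the Pd patches. As a rough illustration only, the brightness step could look like the following Python sketch. The function names, the pixel format (a list of RGB tuples with values from 0 to 255) and the linear scaling to the MIDI range 0 to 127 are assumptions made for this example, not a description of the patch.

```python
def mean_brightness(pixels):
    """Average of (R + G + B) / 3 over all pixels; channels are 0..255."""
    total = 0.0
    count = 0
    for r, g, b in pixels:
        total += (r + g + b) / 3
        count += 1
    if count == 0:
        raise ValueError("image contains no pixels")
    return total / count


def brightness_to_midi(brightness, max_value=255):
    """Scale a brightness value linearly to the MIDI range 0..127."""
    midi_value = round(brightness / max_value * 127)
    return max(0, min(127, midi_value))


# Tiny made-up "image": one dark pixel and one bright pixel.
frame = [(10, 20, 30), (200, 220, 240)]
print(brightness_to_midi(mean_brightness(frame)))
```

Because the value is averaged over the whole frame, it changes slowly, which fits the long, sustained notes described above.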

Histogram

The values of the histogram of the picture are taken and transformed into notes. This sequence is replayed over and over again. Just by listening to the histogram sound it is possible to tell whether the picture has a consistent brightness distribution or whether there are outliers, which appear as spikes in the histogram.

The histogram sound contributes to the general atmosphere by adding a sense of strangeness and oddness. <videoflash type=vimeo>62856047|520|230</videoflash>
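One possible way to turn a histogram into a repeating note sequence is sketched below. The number of bins, the note range and the rule "taller bin means higher note" are assumptions for illustration; the mapping used in the Pd patch may differ.

```python
def histogram(values, bins=16, max_value=255):
    """Count how many brightness values (0..max_value) fall into each bin."""
    counts = [0] * bins
    for value in values:
        index = min(int(value * bins / (max_value + 1)), bins - 1)
        counts[index] += 1
    return counts


def histogram_to_notes(counts, low_note=36, high_note=96):
    """Map each bin to a MIDI note; the tallest bin gets the highest note."""
    peak = max(counts) if counts else 0
    if peak == 0:
        return [low_note] * len(counts)
    span = high_note - low_note
    return [low_note + round(count / peak * span) for count in counts]


# Made-up brightness values: mostly dark with one bright outlier.
brightness_values = [5, 12, 20, 25, 30, 250]
notes = histogram_to_notes(histogram(brightness_values, bins=4))
print(notes)  # this list would be played in a loop
```

With this kind of mapping, an even brightness distribution produces a sequence of similar notes, while a spike in the histogram stands out as a single note that is much higher than its neighbours.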

Combination

The following examples combine the parameters brightness and histogram.

Video stills: <videoflash type=vimeo>56874082|520|210</videoflash> <videoflash type=vimeo>56874080|520|210</videoflash>

Video: <videoflash type=vimeo>56874349|520|210</videoflash>

Movement

Because it indicates whether there is life in the picture or not, movement is a very important parameter for creating an authentic sound, but also a very tricky one. The aim was to make it possible to tell something about the intensity and direction of the movement just by listening to the sound.

This video only shows the movement parameter: <videoflash type=vimeo>62856465|400|300</videoflash>

The y coordinate controls the pitch of the tone: if the creature moves up, the pitch rises, and if the creature moves down, the pitch falls.

The x coordinate controls how fast the notes are played. If the creature moves to the left, the sound is calmer and slower; the further right the creature moves, the faster the sound gets.

There are certain limitations. The detection is not completely accurate, and the cross hair jumps up and down within the creature. It is however possible to tell something about the kind of movement that is performed in the picture.
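The mapping from the tracked position to sound can be sketched as follows. The note range, the interval limits in milliseconds and the assumption that image rows are counted from the top (so a smaller y means "further up") are illustrative choices, not values taken from the Pd patch.

```python
def position_to_sound(x, y, width=640, height=480,
                      low_note=48, high_note=84,
                      slow_interval_ms=800, fast_interval_ms=100):
    """Return (MIDI pitch, time between notes in ms) for a tracked position."""
    # Normalise the coordinates to 0..1 and clamp values outside the image.
    x_norm = min(max(x / (width - 1), 0.0), 1.0)
    y_norm = min(max(y / (height - 1), 0.0), 1.0)
    # Rows are counted from the top, so invert y: higher up means higher pitch.
    pitch = round(low_note + (1.0 - y_norm) * (high_note - low_note))
    # Further right means a shorter interval, so the notes follow faster.
    interval_ms = round(slow_interval_ms
                        + x_norm * (fast_interval_ms - slow_interval_ms))
    return pitch, interval_ms


print(position_to_sound(0, 479))   # bottom left: lowest pitch, slowest notes
print(position_to_sound(639, 0))   # top right: highest pitch, fastest notes
```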

Combination

The following example combines all three parameters: <videoflash type=vimeo> 62856497|400|300</videoflash>

The tracking works well for videos with only one moving object. The problem of jumping cross hairs gets worse when there are several moving objects. In that case the sound no longer reflects the direction of movement, but it still reflects the intensity of movement. <videoflash type=vimeo> 62856099|400|300</videoflash>

End Result

By combining all three parameters, a sound atmosphere is generated that is directly linked to the images seen. Altogether it creates the feeling of a microscopic world that, although it is part of our everyday life, is completely foreign to us. The foreignness already inherent in the picture is intensified by the sound.

Furthermore the approach can be seen as a way to perceive something in the picture that is not directly visible to the eye. A slight change in the brightness of the picture, for example, can be heard much more easily than it can be seen. A further development of the approach could therefore help in perceiving microscopic images, possibly even in a scientific context. <videoflash type=vimeo> 62856510|400|300</videoflash>

Downloads

- SketchUp Model: Media:ModelWedges.zip (SketchUp required)

- Pd Patches and MainStage Synthesizer: Media:Catleen Microscopy Pd Patches.zip (Pure Data and MainStage required)

Links

You can also find the full documentation on my web portfolio (http://www.cathleengoering.de). Cathleen Göring.