Research on integrating Machine Learning and Max/MSP in the development of interactive audiovisual systems

The project consisted of research into the connection between Machine Learning and Max/MSP/Jitter and its possible applications, together with the development of an environment inside Max/MSP to demonstrate and prototype such applications. The final stage of development consisted of reading sensor signals through an Arduino, capturing physiological and potentially environmental data, and routing them through a stochastic sound generation method in Max/MSP whose reactions were determined by Machine Learning.

The research aimed to prototype possible applications of these techniques in the development of musical instruments controlled by, or generating sound in, Max/MSP, in interactive audio and/or video installations, and in other related work. The main interest in using Machine Learning as a creative tool integrated with Max lay in the possibilities opened up by training, and in how these can offer creatives working with Max/MSP new ways of interpreting and manipulating data and, as a consequence, new creative opportunities.

Technical Development

Sound

The aim was to establish a stable loop between the sensors, the sounds synthesised in Max/MSP, the Machine Learning integration and the feedback into sound synthesis in Max (with a potential Digital to Analog conversion of the same data back into sensors and physical objects). For this reason, the sound component of the system was limited to the synthesis of sine waves.

Each sine wave was then processed through different stochastic methods in Max/MSP, namely the drunk and random objects, and through effects, in order to achieve timbral variation.
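The difference between the two stochastic sources named above can be sketched in a few lines of Python. This is only a rough analogue under stated assumptions, not a reimplementation of the Max objects: the function names, the range of 128 and the step size of 5 are illustrative choices, and the exact range conventions of `drunk` and `random` in Max may differ.

```python
import random

def random_values(n, maximum, rng):
    """Independent draws: each value ignores the previous one."""
    return [rng.randrange(maximum) for _ in range(n)]

def drunk_walk(n, maximum, step, rng, start=None):
    """Random walk: each value moves at most `step` away from the previous one."""
    value = maximum // 2 if start is None else start
    out = []
    for _ in range(n):
        value += rng.randint(-step, step)
        value = max(0, min(maximum - 1, value))  # keep the walk inside the range
        out.append(value)
    return out

rng = random.Random(0)                 # fixed seed so the sketch is repeatable
jumpy = random_values(16, 128, rng)    # large, unrelated jumps
smooth = drunk_walk(16, 128, 5, rng)   # small steps around the previous value
```

Mapped to a parameter such as frequency or filter cutoff, the first list produces abrupt changes, while the second drifts gradually, which is why the two give different kinds of timbral variation.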

In the prototype, a step sequencer, whose creation was also documented, was built to control the activation of the oscillators. The parameter deliberately chosen to bridge Max/MSP and the Machine Learning training was the gain slider of each of the seven oscillators, although other parameters could just as easily have been used.
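As an illustration of the structure described above (seven sine oscillators, one gain each, switched on and off by a step sequencer), a minimal Python sketch follows. It is not a transcription of the patch: the frequencies, the sample rate, the step length and the pattern are all hypothetical values chosen for the example.

```python
import math

SAMPLE_RATE = 44100
# Hypothetical tuning: the first seven harmonics of 110 Hz.
FREQS = [110.0 * (i + 1) for i in range(7)]

def mix_sample(t, gains, active):
    """Sum the active sine oscillators at time t (seconds), scaled by their gains."""
    total = 0.0
    for freq, gain, on in zip(FREQS, gains, active):
        if on:
            total += gain * math.sin(2.0 * math.pi * freq * t)
    return total / len(FREQS)  # keep the mix within -1..1

def render_step(gains, active, n_samples):
    """Render one sequencer step as a list of samples."""
    return [mix_sample(i / SAMPLE_RATE, gains, active) for i in range(n_samples)]

# Each row is one sequencer step; 1 means the oscillator is switched on.
pattern = [
    [1, 0, 0, 0, 0, 0, 0],
    [1, 1, 0, 0, 1, 0, 0],
]
gains = [0.9, 0.7, 0.5, 0.4, 0.3, 0.2, 0.1]  # the seven "gain sliders"

audio = []
for active in pattern:
    audio.extend(render_step(gains, active, 441))  # 10 ms per step
```

In this sketch the `gains` list plays the role of the seven sliders, so it is the list that a trained model would read from and write back to.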

One of the tests carried out during the prototype phase was the integration of recorded and live sound input, through a microphone, into the system, and its possible applications within the patch's integration with Machine Learning. Although this was not tested extensively, it confirmed that sound input can be connected to and manipulated through training methods. To do so, the sound input had to be processed with audio analysis techniques, such as the Fast Fourier Transform (FFT), in order to extract more information from the source; this is the same information that can then be processed through training methods.
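To make the role of the FFT concrete, the sketch below turns a block of audio samples into a magnitude spectrum, which is the kind of feature list that could be passed on to a training method. It is a plain-Python radix-2 FFT written for illustration only; the sample rate, block size and test tone are assumptions, and in Max/MSP this analysis would be done with the environment's own FFT objects.

```python
import cmath
import math

def fft(samples):
    """Recursive radix-2 FFT; the input length must be a power of two."""
    n = len(samples)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    if n == 1:
        return [complex(samples[0])]
    even = fft(samples[0::2])
    odd = fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out

def magnitude_spectrum(samples):
    """Magnitudes of the first half of the spectrum, scaled by the block size."""
    n = len(samples)
    return [abs(c) / n for c in fft(samples)[: n // 2]]

SAMPLE_RATE = 8000   # assumed values for the example
BLOCK = 256
TONE_HZ = 1000.0     # falls exactly on bin 32 with these settings

signal = [math.sin(2 * math.pi * TONE_HZ * i / SAMPLE_RATE) for i in range(BLOCK)]
spectrum = magnitude_spectrum(signal)
peak_bin = max(range(len(spectrum)), key=spectrum.__getitem__)
peak_hz = peak_bin * SAMPLE_RATE / BLOCK
```

A single microphone sample carries one number, whereas each block analysed this way yields a whole list of values (128 here), which is what makes the input richer for training.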

Machine Learning

The interest in working with Machine Learning integrated into Max/MSP had several motivations: the limited availability of resources on this integration, both online and offline; limited knowledge of the environments more commonly used for Machine Learning (such as Python and C++); and, above all, an interest in the creative possibilities of working with it regardless of those technical limitations. Integrating these techniques into Max/MSP therefore aimed to expand the creative and technical possibilities that Max/MSP itself offers as a tool.

For this purpose, a third-party software called Wekinator was used as the main element for integration and training. It was developed by Dr. Rebecca Fiebrink, a senior lecturer in Computing at Goldsmiths, University of London, and a member of the university's Embodied Audiovisual Interaction (EAVI) group. The software, whose first version was developed in 2012, aims to democratise Machine Learning for creators, making it usable without knowledge of other programming environments, which can be considerably more complex than Wekinator. It can be integrated with a wide range of software already familiar to each creator. In this case that software is Max/MSP, but it could have been any other software that supports external integration, such as Processing or SuperCollider, since the connection for training takes place through Open Sound Control (OSC).

Machine Learning algorithms can be trained in two different ways: Supervised and Unsupervised Learning. In short, Supervised Learning trains an algorithm on examples that specify an input and a corresponding output. This means it is possible to determine, to some extent, what output the training on a dataset is supposed to produce. In Unsupervised Learning, the training is given input data, but no specific output is determined. This means the resulting output can consist of unexpected combinations of the data. Each approach is better suited to different environments and uses of Machine Learning for data processing.

Wekinator is based on Supervised Learning algorithms, meaning that its training process stipulates given inputs and outputs. Setting up the training system, which is called a Model, involves specifying three elements: the inputs coming from the software connected to Wekinator (in this case, Max/MSP), the Model itself (inside Wekinator), and the output, which is the data produced by the trained Model and can be sent back to the first program or to another specified program. As mentioned, inputs and outputs are connected through OSC, which allows the output data to control either the same parameters used as input or different ones. In the system developed here, the data processed after training is sent back to Max/MSP and controls the same parameters that were used as inputs for the training process (the gain sliders).
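The supervised workflow described above (record input/output examples, train a Model, then run it on new inputs) can be sketched with the simplest possible model, a straight line fitted by least squares. This is an illustration of the idea only and makes no claim about Wekinator's internal implementation; the example values and function names are hypothetical.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept to the training examples."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    variance = sum((x - mean_x) ** 2 for x in xs)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

def run_model(model, x):
    """Apply the trained model to a new input value."""
    slope, intercept = model
    return slope * x + intercept

# Hypothetical training examples: incoming slider value -> desired gain.
inputs = [0.0, 0.5, 1.0]
targets = [0.2, 0.5, 0.8]

model = fit_line(inputs, targets)    # "training"
new_gain = run_model(model, 0.25)    # "running": an input never seen in training
```

The point of the example is the last line: once trained, the model produces an output for inputs that were never recorded, which is what gets sent back to the gain sliders.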

In this case, the two systems, Input and Output, were called Neurons, as a direct reference to the training process applied to them. The name also refers to biological neurons, as the investigation into training processes was influenced by the study of neural systems, following the initial intention of using an EEG (Electroencephalogram) as a sensor to control the input data in Max/MSP. In Machine Learning, one of the main training systems is the so-called Neural Network, which is itself inspired by the functioning of biological neural networks. In artificial systems, a Neural Network consists of layers of so-called Neurons, each holding a value, with the Neurons of one layer connected to those of the next.
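The layered structure described above can be written down as a short forward pass: each Neuron takes the values of the previous layer, weights them, adds a bias and squashes the result. The sketch below assumes a sigmoid activation and uses made-up weights for a network with two inputs, three hidden Neurons and one output; none of these numbers come from the project.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weights, biases):
    """One layer: every neuron computes a weighted sum of all inputs plus a bias."""
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

def network_forward(inputs, layers):
    """Pass the data through each layer in turn and return the final activations."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations

# Hypothetical weights: 2 inputs -> 3 hidden neurons -> 1 output neuron.
layers = [
    ([[0.5, -0.2], [0.1, 0.8], [-0.6, 0.3]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.4, 0.2]], [0.05]),
]
output = network_forward([0.3, 0.9], layers)
```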

In short, training a neural network works by processing and reprocessing the data through the different layers until it is finally output. Within each layer, each Neuron has different weights, and the training process searches for the weight values that bring the output closer to a given target value. Training therefore involves experimenting with different inputs and training settings in order to reach the set of outputs that is most interesting for the Model.
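The search for better weight values can be shown on a single Neuron. The sketch below trains one sigmoid Neuron by gradient descent on the log-loss, for which the weight update is proportional to (output - target) times the input. The task (logical OR), the learning rate and the number of epochs are arbitrary choices made for the example.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def predict(weights, bias, inputs):
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)

def train(examples, epochs=2000, rate=0.5):
    """Adjust the weights a little after every example to reduce the error."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            error = predict(weights, bias, inputs) - target
            # Gradient of the log-loss for a sigmoid neuron: error * input.
            weights = [w - rate * error * x for w, x in zip(weights, inputs)]
            bias -= rate * error
    return weights, bias

# Hypothetical task: output 1 when at least one of the two inputs is 1.
EXAMPLES = [
    ([0.0, 0.0], 0.0),
    ([0.0, 1.0], 1.0),
    ([1.0, 0.0], 1.0),
    ([1.0, 1.0], 1.0),
]
weights, bias = train(EXAMPLES)
outputs = [predict(weights, bias, x) for x, _ in EXAMPLES]
```

Networks with several layers apply the same principle, with backpropagation used to pass the error back to the weights of the earlier layers.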

This brief explanation remains relevant to working with Wekinator, even though Wekinator limits how far some of these aspects, such as the weights or the number of layers in Neural Network training, can be specified. The software works as a black box: it is not really possible to grasp everything that happens at the algorithmic level, which gives the creator less autonomy over the training process and its possible outputs. It does, however, offer different types of training methods, such as Neural Networks and Linear or Polynomial Regression. To gain more autonomy over the training process despite these limitations, an understanding of some key Machine Learning terms can be necessary, or at least helpful, namely: classifiers, backpropagation and decision stumps, amongst others.

Overview on Training with Wekinator

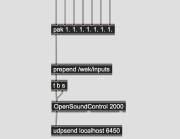

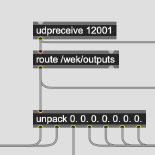

After this short overview of Machine Learning in general, and of the decision to work with Wekinator given the opportunities and limitations it offers, a short description of the connection between Max/MSP and Wekinator is in order. Based on the final patch developed for the system, a brief step-by-step follows, covering the inputs, the Model processing and training, and the output back to Max/MSP.

Installing Wekinator also installs an additional program called WekiInputHelper. WekiInputHelper helps to make sure that the inputs are connected successfully, and to monitor the input and output signals and data. Using WekiInputHelper is not compulsory; when it is used, an additional OSC port has to be specified. WekiInputHelper then listens on one port for the data sent from Max/MSP and forwards it to Wekinator through another port (for example, 6448 and 6449). In Wekinator, an output port is also set (for example, 12001), through which the output data can be sent back to Max/MSP or to another intended program.
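To show what travels over these ports, the sketch below builds an OSC message by hand and sends it over UDP, using only the Python standard library. It follows the OSC 1.0 encoding (null-terminated strings padded to four bytes, a type tag string, big-endian 32-bit floats). The address `/wek/inputs` is assumed here to be the address Wekinator listens for by default, and port 6448 is taken from the example in the text; both should be checked against the actual Wekinator settings. In the project itself this message is sent from Max/MSP rather than from Python.

```python
import socket
import struct

def osc_string(text):
    """Encode a string as OSC requires: null-terminated, padded to 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)

def osc_message(address, floats):
    """Build an OSC message that carries a list of 32-bit floats."""
    type_tags = "," + "f" * len(floats)
    payload = b"".join(struct.pack(">f", value) for value in floats)
    return osc_string(address) + osc_string(type_tags) + payload

def send_inputs(values, host="127.0.0.1", port=6448):
    """Send one frame of input values over UDP (address and port are assumptions)."""
    packet = osc_message("/wek/inputs", values)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
    return packet

# Build (without sending) a message carrying three hypothetical gain values.
packet = osc_message("/wek/inputs", [0.5, 0.25, 1.0])
```

Calling `send_inputs([0.5, 0.25, 1.0])` would transmit the same packet to the local machine; the values coming back from Wekinator use the same encoding on the output port.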

Visual Guide on working with WekiInputHelper and Wekinator

Further instructions on working with Wekinator can be found here: General Instructions

Sensors

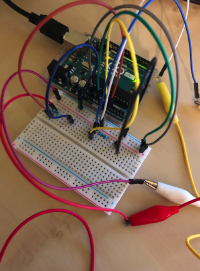

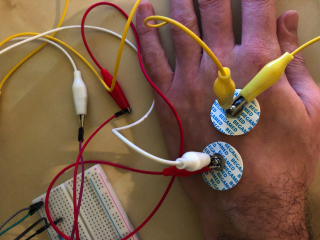

For prototyping the system and its subsequent sensor integration, EMG sensors were used to capture body signals and translate them, through an Arduino, into data to be processed in Max/MSP. In the example, two sensors control the audio gain of two of the oscillators developed in Max/MSP; these gains are then used for training in Wekinator and sent back to Max/MSP.
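The step from raw sensor readings to gain values can be sketched as follows. The sketch rests on several assumptions that are not stated in the project: that the Arduino prints the two readings as a text line such as `512 300`, that the readings span a 10-bit range (0 to 1023), and that a simple exponential smoothing is enough to steady the signal. All function names are illustrative.

```python
def parse_line(line):
    """Split one text line from the serial port into integer readings."""
    return [int(part) for part in line.split()]

def normalise(raw, lo=0, hi=1023):
    """Map a raw reading to the 0..1 range used by a gain slider (10-bit assumed)."""
    raw = max(lo, min(hi, raw))
    return (raw - lo) / (hi - lo)

def smooth(values, alpha=0.2):
    """Exponential moving average: follow the signal while damping sudden spikes."""
    if not values:
        return []
    level = values[0]
    out = []
    for value in values:
        level += alpha * (value - level)
        out.append(level)
    return out

# Hypothetical serial lines, one per reading of the two sensors.
lines = ["512 300", "530 310", "900 305", "520 298"]
readings = [parse_line(line) for line in lines]
gain_a = smooth([normalise(pair[0]) for pair in readings])
gain_b = smooth([normalise(pair[1]) for pair in readings])
```

The resulting `gain_a` and `gain_b` lists stay between 0 and 1, so they can stand in for the two sensor-driven gain sliders.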

Process & Method

The development of the system, the research into Machine Learning and its possible use in instrument development, and the learning of Wekinator took place through a work process made up of different stages. These ran in parallel with the development of the final patch, available in the patches section, which connects the whole system (sensor data input, signal control in Max/MSP, input and output through Wekinator, and training methods).

The early stages of conceptual development, and of research and experimentation with an EEG as a data control sensor in Max/MSP, are summarised on the following subpage.

One of the stages of implementing Machine Learning and Neural Network training in Max/MSP was an attempt to write a Neural Network inside Max/MSP, by patching a single neuron and then developing a layer of neurons. This exploration was discontinued, as it proved not to be the most time-efficient way of obtaining the kind of outputs that Wekinator had already shown to be possible.

Results

Sensor Signal Monitoring

The sensor signal input was an important element of the prototype. This was not because of the particular signal received from the EMG: for more advanced uses, the functioning of such sensors would need to be explored in depth, according to a specific project idea or brief. Rather, it served to confirm that integrating the system with the physical world was possible, and that it could be extended to any other sensor whose physical measurements can be translated into data in Max/MSP and integrated with Wekinator. Machine Learning can thus be added to the already known possibilities of working with Max/MSP: audio, visual and audiovisual interactive installations, audio programming for sound design, music and sound installations, visual programming with Jitter, integration with Ableton Live through Max for Live, amongst countless others. Connecting sensor input data was therefore important to complete the cycle of sensing the physical world, programming data in Max/MSP, and using Machine Learning to expand what can be created within the framework of Max/MSP.

In the prototype, the data from two EMG sensors was integrated into the control of parameters of two of the developed oscillators. These are two of the seven data inputs sent from Max/MSP into Wekinator, which are then sent back to Max/MSP after training. The sensor signals are read by the Arduino and carried through alligator cables and patches.

Training Demonstration

Max/MSP Patches

Archive of the development process of the patch.

1. First Experiment on Synth Building

2. Ongoing Experiment on building additive synthesis

3. Test on controlling additive synthesis with Arduino

6. From Additive Synthesis to Machine Learning

7. Experiment with Analog Audio Input and ML

8. Neurosynth feedback Max + ML

9. Arduino/sensor signal feedback with ML (first attempt)

10. Final Patch

Arduino Patch

References

- Camastra, Francesco; Vinciarelli, Alessandro. Machine Learning for Audio, Image and Video Analysis: Theory and Applications, Second Edition, 2015.

- Guy Ben-Ary CellF Neural Synth

- David Tudor Article on The Neural Network Synthesizer

- David Tudor Neural Synthesis Nos. 6-9 (extract)

- Marcus Lyall On your Wavelength (2015)

- Prof. Mick Grierson Brain Computer Music Interface (2008)

- Google AI/Magenta Studio NSynth Super