Project Description

Full Artistic Statement in German as PDF download

Video Documentation (with screen recording)

Audio Samples

The complete patch, including download, purchase or information links to:

- all externals used in the patch
- all the hardware used in the patch and where to buy it (including the audio output situation)

Patch walkthrough and tutorial

Conclusion and future works

All the things stated above will be added here as soon as the project is finished.

Introduction

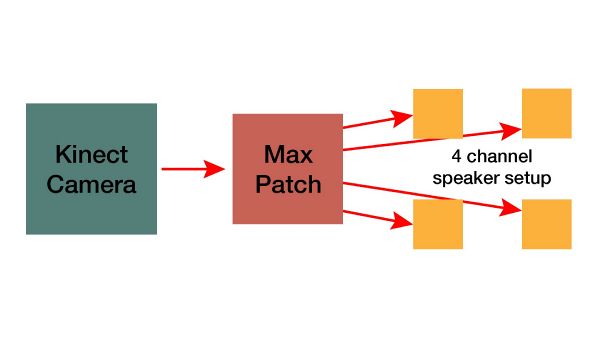

The goal of the semester project was to write a Max patch for an interactive sound installation. The installation connected the movement of an individual in a defined space or room to the acoustic environment of that space.

The main idea was to give the person a certain amount of control over the parameters of the sounds that are played and positioned in the room, without the person being aware of the interaction. This required an interface that was neither visible nor touchable from the outset, so the installation used video tracking to follow the person's movement in the room.

The data gained from the video tracking is used to influence the parameters of the sound as well as its spatialisation, meaning its position in the room. This already offers a lot of potential to give the recipient a feeling of influence on their environment.
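As a rough illustration of this mapping, the following Python sketch normalises a tracked pixel position and derives spatial coordinates and one sound parameter from it. It is not taken from the Max patch: the 640x480 frame size, the `SoundControl` fields and the cutoff range of 200 to 4000 Hz are assumptions chosen for the example.

```python
from dataclasses import dataclass


def normalise(value: float, lo: float, hi: float) -> float:
    """Scale value from [lo, hi] to [0.0, 1.0], clamping out-of-range input."""
    if hi <= lo:
        raise ValueError("hi must be greater than lo")
    return min(1.0, max(0.0, (value - lo) / (hi - lo)))


def scale(norm: float, out_lo: float, out_hi: float) -> float:
    """Map a normalised value in [0, 1] linearly onto [out_lo, out_hi]."""
    return out_lo + norm * (out_hi - out_lo)


@dataclass
class SoundControl:
    pan_x: float      # 0.0 = left edge of the tracked area, 1.0 = right edge
    pan_y: float      # 0.0 = top edge of the tracked area, 1.0 = bottom edge
    cutoff_hz: float  # hypothetical sound parameter, here a filter cutoff


def position_to_control(px: float, py: float,
                        frame_w: int = 640, frame_h: int = 480) -> SoundControl:
    """Turn a tracked pixel position into spatial and sound parameters.

    The frame size and the cutoff range are assumed values for illustration.
    """
    x = normalise(px, 0, frame_w - 1)
    y = normalise(py, 0, frame_h - 1)
    return SoundControl(pan_x=x, pan_y=y, cutoff_hz=scale(y, 200.0, 4000.0))
```

Keeping normalisation and scaling as separate steps means the same tracked position can drive several parameters, each with its own output range.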

Artistic Statement

The whole process of planning, creating and building this installation happened during the Covid-19 lockdown, so most of my life during this time took place in my room. Working, spending free time, small projects, sleeping, feeling good and also feeling bad: it all happened in the same small, closed space.

The project also takes place inside a closed environment, a defined room. Nothing that happens outside of this microcosm matters for the installation, only what is inside of it. So when building and composing the installation, it seemed obvious to me that it had to happen in my home.

This means I composed for my own personal, private space, connecting the sounds to the different places inside this room and to my feelings and situation in these places: the working area, my desk, my bed, my TV, my windows to the outside world. All these places were given a meaning in the installation, with me, the recipient, as a moving, exploring and interacting person inside this environment.

This makes the composition very personal and emotional, as it embraces my feelings and emotions during the Covid-19 lockdown.

The Setup

To be independent of the lighting conditions in the room, an infrared camera was used. The most practical and, above all, cheapest way to do this was to use a Kinect 360 camera.

In order to position the sounds in the room and change these positions, it was necessary to have a setup of multiple speakers. In this case, a surround speaker setup of four speakers was used.
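One simple way to think about distributing a sound across four speakers is equal-power panning between the corners of the room. The Python sketch below is only an illustration under that assumption; the Resources section lists the ICST Ambisonics plugins, which work differently. The function name `quad_gains` and the speaker order are hypothetical.

```python
import math
from typing import Tuple


def quad_gains(x: float, y: float) -> Tuple[float, float, float, float]:
    """Equal-power gains for four corner speakers.

    x: 0.0 = left, 1.0 = right; y: 0.0 = front, 1.0 = back.
    Returns gains in the assumed order
    (front_left, front_right, rear_left, rear_right).
    The squared gains always sum to 1, so perceived loudness stays
    roughly constant while the sound moves.
    """
    if not (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0):
        raise ValueError("x and y must lie in [0, 1]")
    ax = x * math.pi / 2  # left/right angle, 0 to 90 degrees
    ay = y * math.pi / 2  # front/back angle, 0 to 90 degrees
    left, right = math.cos(ax), math.sin(ax)
    front, back = math.cos(ay), math.sin(ay)
    return (left * front, right * front, left * back, right * back)


if __name__ == "__main__":
    # A sound in the middle of the room is fed equally to all four speakers.
    print(quad_gains(0.5, 0.5))
```

Each output sample for a speaker would then be the input sample multiplied by that speaker's gain.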

Video Documentation

A video of the room will be placed here, showing me in the finished, running installation. The audio signal will be explained, recorded and converted to stereo. This way the audio is no longer spatialised, but it can at least be heard.

Contents of the video:

- showing the setup
- running the final installation
- explaining the sound and parameter changes

Patch Walkthrough and Setup Tutorial

Resources

HARDWARE

Kinect 360 sensor https://www.amazon.de/Microsoft-LPF-00057-Xbox-Kinect-Sensor/dp/B009SJAIP6 (pretty expensive... but you can easily get used ones for less than 40€ on ebay-kleinanzeigen.de)

Kinect 360 Adapter https://www.amazon.de/gp/product/B008OAVS3Q/ref=ppx_yo_dt_b_asin_title_o04_s00?ie=UTF8&psc=1

To output audio to multiple external speakers, you'll most likely need a

SOFTWARE

ICST Ambisonics Plugins free download https://www.zhdk.ch/forschung/icst/icst-ambisonics-plugins-7870

TUTORIALS

Color Tracking in Max MSP https://www.youtube.com/watch?v=t0OncCG4hMw&list=PLG-tSxIO2Jkjj0BthZ_y0GRkWRjAvL1Uo&index=4&t=310s (Part 1 of 3)

Kinect Input and normalisation https://www.youtube.com/watch?v=ro3OwWnjfDk&list=PLG-tSxIO2Jkjj0BthZ_y0GRkWRjAvL1Uo&index=5

Conclusion and future works

The project is finished for now. However, the patch and the installation context will be developed further, as there is huge potential in this instrument.

So far, the control works only through changes in the position values. It is planned to add, for example, blob tracking to the patch, so that parameters can be controlled by a certain movement, no matter where in the room it happens. This would make the whole sound sculpture more diverse and flexible.
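Position-independent control could start from something as simple as frame differencing, which measures how much of the image changed between two frames regardless of where the change happened. The Python sketch below is a simplified stand-in for this idea, not blob tracking itself and not part of the patch; the function name `motion_amount` and the threshold of 20 are assumptions.

```python
from typing import Sequence

Frame = Sequence[Sequence[int]]  # rows of 8-bit grey values (0 to 255)


def motion_amount(prev: Frame, curr: Frame, threshold: int = 20) -> float:
    """Return the fraction of pixels that changed by more than `threshold`.

    The result lies in [0.0, 1.0] and does not depend on where in the
    frame the movement took place.
    """
    if len(prev) != len(curr) or any(
        len(a) != len(b) for a, b in zip(prev, curr)
    ):
        raise ValueError("frames must have identical dimensions")
    total = sum(len(row) for row in curr)
    if total == 0:
        return 0.0
    changed = sum(
        1
        for row_prev, row_curr in zip(prev, curr)
        for a, b in zip(row_prev, row_curr)
        if abs(a - b) > threshold
    )
    return changed / total
```

The returned value could be mapped to a sound parameter in the same way as a position value.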

A major addition that may happen during the next semester is a visual dimension. This means the sensor data will also be used to control, for example, the lighting in the room. That is why it is important not to use a simple camera, but a Kinect 360 with an infrared camera, whose tracking does not depend on the lighting conditions.